
9/12/2023

Auditory illusions buzzing

The requirement of VR developers and programmers to produce virtual auditory scenes to accompany the visual elements (within the strict computational constraints imposed by available technology) is a unique driver to this field of research. The relevance of the work has also expanded as virtual reality (VR) has developed. It is clear that complex neural computation is at work to process the various cues, so discoveries in this field have the potential to impact on other areas of neural computation. This research is important for understanding the basic concepts of how we perceive and process dynamic auditory cues, though this relatively simple premise has proven difficult to tease out in practice.

Sound source localization requires the interaction of two sets of cues, one based on auditory spatial cues and another that indicates the position of the head The second is concerned with cues used to determine the position of the head (or body, or eyes) when listeners and/or sound sources move, and listeners are asked to judge where a sound is coming from. The first involves experiments examining the role of auditory spatial cues and their interaction with head-position cues when listeners and/or sound sources move.

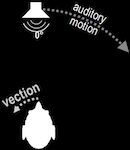

To test this hypothesis the team are using two interacting approaches. Both sets of cues are required for the brain to determine the location of sound sources in the everyday world.” He believes, based on research in vision, that, “sound source localization requires the interaction of two sets of cues, one based on auditory spatial cues and another that indicates the position of the head (or body, or perhaps the eyes). Prof Yost has picked up where Wallach left off, having already identified these features independently before discovering Wallach’s earlier work. Wallach reasoned that, “Two sets of sensory data enter into the perceptual process of localization, (1) the changing binaural cues and (2) the data representing the changing position of the head.” Much of Wallach’s research was largely forgotten with the outbreak of war in Europe, and his experiments only recently reproduced, but modern researchers in sound source localization (such as Prof Yost) are coming to understand the importance of the observations he made. RMS error for cochlear implant listeners for high-frequency stimuli is approximately 29°. Root-mean-squared (RMS) localization error in the frontal hemifield for normal hearing listeners for all broadband stimuli was measured, using many more loudspeakers than six, as approximately 6°. Schematic illustration of the experimental apparatus. This correlates with another feature known as front-back confusions, where a sound located behind an observer can appear to emanate from in front, and vice versa when the head remains stationary.įigure 1. If the sound source is rotated at twice the speed of the individual’s head, the sound is perceived as stationary originating from the opposite position from which it started. In 1940, Hans Wallach described an auditory illusion which could be created by rotating the listener and the sound source in the same plane. 
Professor Yost’s research is focussed on understanding how the auditory system determines that a stationary sound source does not move when listener movement causes the auditory spatial cues to change. In the everyday world, however, this is not the case: a stationary sound source is not perceived as moving when a listener moves. This means, when listeners move, the auditory spatial cues would indicate that the sound source moved, even if it was stationary. However, these cues change based on the movement of either the sound source and/or the listener. The subtle differences in sound received at each ear, known as auditory spatial cues, are used for locating sound sources. In the everyday world listeners and sound sources move, which presents a challenge for localising sound sources. Building on work that started in the early 1940s but has seen little in the way of progress until now, the research group are pioneering novel methods to probe this complex area of neural computation. Professor William Yost, and colleagues at Arizona State University, are investigating how the brain processes auditory stimuli to accurately locate a sound.
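Wallach’s rotating-source illusion and the front-back confusion described above can both be reproduced with a very small model. The sketch below is an illustration under stated assumptions, not the research team’s method: it treats the ears as two points an assumed 0.18 m apart with no head shadow, so the interaural time difference (ITD) follows a simple sine law of the head-relative azimuth (a textbook simplification; spherical-head models such as Woodworth’s are more realistic). The constants and the function names `itd_seconds`, `stationary_itds`, `wallach_itds` and `front_or_back` are hypothetical. The sketch shows that a source rotating at twice the head’s speed produces the same ITD sequence as a stationary source at the front-back mirror position, and that pairing the ITD change with the known direction of a head turn (Wallach’s two sets of sensory data) separates front from back.

```python
import math
from typing import List, Sequence

# Hypothetical, simplified listener model (an assumption, not the study's model):
# two ears EAR_DISTANCE_M apart with no head shadow, so the interaural time
# difference (ITD) depends only on the sine of the head-relative azimuth.
# Azimuth convention: 0 deg = straight ahead, 90 deg = right, 180 deg = behind.
EAR_DISTANCE_M = 0.18    # assumed ear separation in metres
SPEED_OF_SOUND = 343.0   # metres per second in air at roughly 20 degrees C


def itd_seconds(head_relative_azimuth_deg: float) -> float:
    """Sine-law ITD; positive values mean the sound reaches the right ear first."""
    return (EAR_DISTANCE_M / SPEED_OF_SOUND) * math.sin(
        math.radians(head_relative_azimuth_deg)
    )


def stationary_itds(source_deg: float, head_angles_deg: Sequence[float]) -> List[float]:
    """ITDs for a fixed source while the head turns through head_angles_deg."""
    return [itd_seconds(source_deg - h) for h in head_angles_deg]


def wallach_itds(start_deg: float, head_angles_deg: Sequence[float]) -> List[float]:
    """ITDs for a source that rotates at twice the head's angular speed.

    When the head has turned by h, the source sits at start_deg + 2h in the
    room, so its head-relative azimuth is start_deg + h.
    """
    return [itd_seconds((start_deg + 2 * h) - h) for h in head_angles_deg]


def front_or_back(itd_before: float, itd_after: float, head_turn_deg: float) -> str:
    """Classify a source as in front or behind from the ITD change over a small head turn.

    Under the sine-law model, turning the head right (positive turn) lowers the
    ITD of a frontal source and raises it for its mirror image behind; a left
    turn does the opposite. Assumes the turn is small enough that the source
    does not cross the interaural axis.
    """
    if head_turn_deg == 0:
        raise ValueError("a head turn is needed to break the front-back ambiguity")
    trend = (itd_after - itd_before) * head_turn_deg
    return "front" if trend < 0 else "back"


if __name__ == "__main__":
    turns = [0, 10, 20, 30]
    # A source starting straight ahead and rotating at twice head speed...
    rotating = wallach_itds(0, turns)
    # ...gives the same ITD sequence as a stationary source directly behind.
    behind = stationary_itds(180, turns)
    for h, a, b in zip(turns, rotating, behind):
        print(f"head {h:>2} deg: rotating {a * 1e6:7.1f} us, stationary behind {b * 1e6:7.1f} us")

    # With the head still, 30 deg (front) and 150 deg (back) give identical ITDs;
    # a 10 deg right turn separates them.
    for source in (30, 150):
        before, after = stationary_itds(source, [0, 10])
        print(source, front_or_back(before, after, 10))
```

In this toy model the rotating source and its stationary mirror image cannot be told apart from ITDs even when the head’s own motion is known, which is consistent with the illusion Wallach reported.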

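The RMS localization errors quoted above (about 6° for normal-hearing listeners and about 29° for cochlear implant listeners) summarize many trials in one number. A minimal sketch of that calculation follows, assuming each trial yields a target azimuth and a response azimuth in degrees. The function names `wrapped_error_deg` and `rms_error_deg` and the wrap-around step are illustrative choices, not the study’s procedure, which may score responses differently (for example, in how front-back reversals are handled).

```python
import math
from typing import Sequence


def wrapped_error_deg(response_deg: float, target_deg: float) -> float:
    """Signed response-minus-target error, wrapped into [-180, 180) degrees."""
    return (response_deg - target_deg + 180.0) % 360.0 - 180.0


def rms_error_deg(responses_deg: Sequence[float], targets_deg: Sequence[float]) -> float:
    """Root-mean-squared localization error over paired trials, in degrees."""
    if len(responses_deg) != len(targets_deg) or len(targets_deg) == 0:
        raise ValueError("responses and targets must be non-empty and the same length")
    squared = [wrapped_error_deg(r, t) ** 2 for r, t in zip(responses_deg, targets_deg)]
    return math.sqrt(sum(squared) / len(squared))


if __name__ == "__main__":
    # Made-up trials for illustration only; these are not data from the study.
    targets = [-60.0, -30.0, 0.0, 30.0, 60.0]
    responses = [-55.0, -36.0, 2.0, 27.0, 66.0]
    print(f"RMS error: {rms_error_deg(responses, targets):.1f} deg")
```

Wrapping the error matters for sources near the back: a response at -170° to a target at 170° is a 20° miss, not a 340° one.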